欢迎来到 Faiss 文档

Faiss

Faiss 是一个用于高效相似性搜索和密集向量聚类的库。它包含可以在任何大小的向量集中搜索的算法,甚至可以处理那些可能无法放入 RAM 中的向量集。它还包含用于评估和参数调整的支持代码。

Faiss 使用 C++ 编写,并提供了完整的 Python 封装。 一些最有用的算法是在 GPU 上实现的。它主要在 Meta 的基础 AI 研究团队 FAIR 开发。

什么是相似性搜索?

给定一组维度为 \(d\) 的向量 \(x_i\),Faiss 会从中构建一个 RAM 中的数据结构。构建结构后,当给定一个维度为 \(d\) 的新向量 \(x\) 时,它会有效地执行以下操作:

其中 \(\lVert\cdot\rVert\) 是欧几里得距离 (\(L^2\))。

在 Faiss 术语中,数据结构是一个 *索引*,一个具有 *add* 方法来添加 \(x_i\) 向量的对象。 请注意,\(x_i\) 假设是固定的。

计算 argmin 是索引上的 *search* 操作。

这就是 Faiss 的全部内容。它还可以

不仅返回最近的邻居,还返回第二近、第三近、……、第 k 近的邻居

一次搜索多个向量,而不是一次搜索一个(批量处理)。 对于许多索引类型,这比一个接一个地搜索向量更快

以精度换取速度,即,以 10 倍的速度或使用 10 倍更少的内存的方法,给出 10% 的不正确结果

执行最大内积搜索 \(argmax_i \langle x, x_i \rangle\) 而不是最小欧几里德搜索。对其他距离(L1、Linf 等)也有有限的支持。

返回查询点给定半径内的所有元素(范围搜索)

将索引存储在磁盘上而不是在 RAM 中。

索引二进制向量而不是浮点向量

根据向量 ID 上的谓词忽略索引向量的子集。

安装

推荐的安装 Faiss 的方法是通过 Conda

$ conda install -c pytorch faiss-cpu

faiss-gpu 包提供 CUDA 启用的索引

$ conda install -c pytorch faiss-gpu

请注意,应该安装其中一个包,而不是同时安装两个包,因为后者是前者的超集。

Faiss 的研究基础

Faiss 基于多年的研究。 最值得注意的是,它实现了

来自 “Video google: A text retrieval approach to object matching in videos.”, Sivic & Zisserman, ICCV 2003 的倒排文件。 这是大型数据集中非穷举搜索的关键。 否则,所有搜索都需要扫描索引中的所有元素,即使为每个元素应用的操作很快,这也是令人望而却步的。

来自 “Product quantization for nearest neighbor search”, Jégou & al., PAMI 2011 的乘积量化 (PQ) 方法。 这可以被看作是高维向量的一种有损压缩技术,允许在压缩域中进行相对准确的重建和距离计算。

来自 “Searching in one billion vectors: re-rank with source coding”, Tavenard & al., ICASSP’11 的三级量化 (IVFADC-R aka IndexIVFPQR) 方法。

来自 “The inverted multi-index”, Babenko & Lempitsky, CVPR 2012 的倒排多索引。 这种方法大大提高了倒排索引的速度,适用于快速/不太精确的操作点。

来自 “Optimized product quantization”, He & al, CVPR 2013 的优化 PQ。 这种方法可以被看作是向量空间的线性变换,使其更适合使用乘积量化器进行索引。

来自 “Polysemous codes”, Douze & al., ECCV 2016 的乘积量化器距离的预过滤。 这种技术在计算 PQ 距离之前执行二进制过滤阶段。

GPU 实现和快速 k 选择在 “Billion-scale similarity search with GPUs”, Johnson & al, ArXiv 1702.08734, 2017 中描述

来自 “Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs”, Malkov & al., ArXiv 1603.09320, 2016 的 HNSW 索引方法

来自 “Quicker ADC : Unlocking the Hidden Potential of Product Quantization with SIMD”, André et al, PAMI’19 的寄存器内向量比较,也用于 [“Accelerating Large-Scale Inference with Anisotropic Vector Quantization” <https://arxiv.org/abs/1908.10396>`_, Guo, Sun et al, ICML’20.

来自 “Fast Search in Hamming Space with Multi-Index Hashing”, Norouzi et al, CVPR’12 的二进制多索引哈希方法。

来自 “Fast Approximate Nearest Neighbor Search With The Navigating Spreading-out Graph” <https://arxiv.org/abs/1707.00143>`_, Cong Fu et al, VLDB 2019 的基于图的索引方法 NSG。

来自 “Revisiting additive quantization”, Julieta Martinez, et al. ECCV 2016 和 “LSQ++: Lower running time and higher recall in multi-codebook quantization”, Julieta Martinez, et al. ECCV 2018 的局部搜索量化方法。

来自 “Improved Residual Vector Quantization for High-dimensional Approximate Nearest Neighbor Search”, Shicong Liu et al, AAAI’15 的残差量化器实现。

一篇关于乘积量化和相关方法的通用论文:“A Survey of Product Quantization”, Yusuke Matsui, Yusuke Uchida, Hervé Jégou, Shin’ichi Satoh, ITE transactions on MTA, 2018。

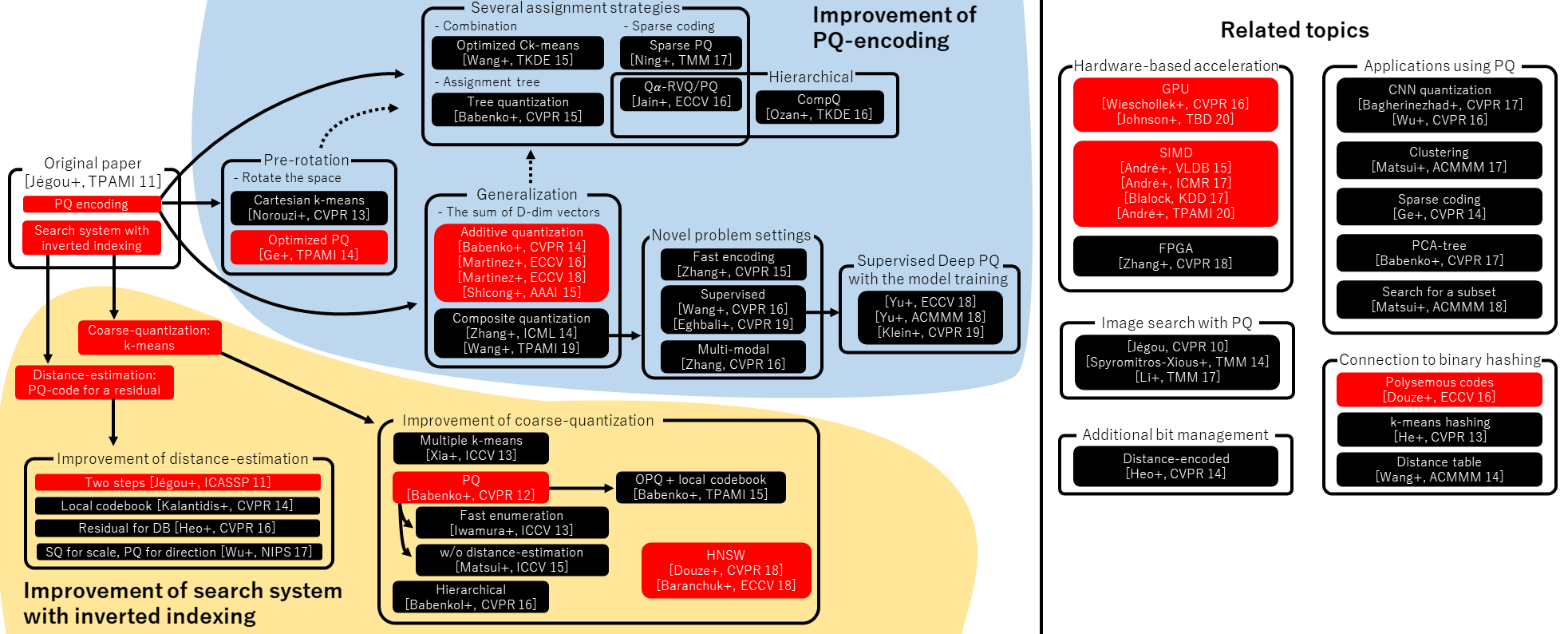

下面的概述图像来自该论文(单击图像放大它)

图片来源:Yusuke Matsui,感谢您允许我们使用它!

Faiss 中实现的方法以红色突出显示。

所有参考文献的关键

André+, “Cache Locality is not Enough: High-performance Nearest Neighbor Search with Product Quantization Fast Scan”, VLDB 15 André+, “Accelerated Nearest Neighbor Search with Quick ADC”, ICMR 17 André+, “Quicker ADC : Unlocking the Hidden Potential of Product Quantization with SIMD”, IEEE TPAMI 20 Babenko and Lempitsky, “The Inverted Multi-index”, CVPR 12 Babenko and Lempitsky, “Additive Quantization for Extreme Vector Compression”, CVPR 14 Babenko and Lempitsky, “The Inverted Multi-index”, IEEE TPAMI 15 Babenko and Lempitsky, “Tree Quantization for Large-scale Similarity Search and Classification”, CVPR 15 Babenko and Lempitsky, “Efficient Indexing of Billion-scale Datasets of Deep Descriptors”, CVPR 16 Babenko and Lempitsky, “Product Split Trees”, CVPR 17 Bagherinezhad+, “LCNN: Lookup-based Convolutional Neural Network”, CVPR 17 Baranchuk+, “Revisiting the Inverted Indices for Billion-Scale Approximate Nearest Neighbors”, ECCV 18 Blalock and Guttag, “Bolt: Accelerated Data Mining with Fast Vector Compression”, KDD 17 Eghbali and Tahvildari, “Deep Spherical Quantization for Image Search”, CVPR 19 Douze+, “Polysemous Codes”, ECCV 16 Douze+, “Link and code: Fast Indexing with Graphs and Compact Regression Codes”, CVPR 18 Ge+, “Optimized Product Quantization”, IEEE TPAMI 14 Ge+, “Product Sparse Coding”, CVPR 14 He+, “K-means Hashing: An Affinity-preserving Quantization Method for Learning Binary Compact Codes”, CVPR 13 Heo+, “Short-list Selection with Residual-aware Distance Estimator for K-nearest Neighbor Search”, CVPR 16 Heo+, “Distance Encoded Product Quantization”, CVPR 14 Iwamura+, “What is the Most Efficient Way to Select Nearest Neighbor Candidates for Fast Approximate Nearest Neighbor Search?”, ICCV 13 Jain+, “Approximate Search with Quantized Sparse Representations”, ECCV 16 Jégou+, “Product Quantization for Nearest Neighbor Search”, IEEE TPAMI 11 Jégou+, “Aggregating Local Descriptors into a Compact Image Representation”, CVPR 10 Jégou+, “Searching in One Billion Vectors: Re-rank with Source Coding”, ICASSP 11 Johnson+, “Billion-scale Similarity Search with GPUs”, IEEE TBD 20 Klein and Wolf, “End-to-end Supervised Product Quantization for Image Search and Retrieval”, CVPR 19 Kalantidis and Avrithis, “Locally Optimized Product Quantization for Approximate Nearest Neighbor Search”, CVPR 14 Li+, “Online Variable Coding Length Product Quantization for Fast Nearest Neighbor Search in Mobile Retrieval”, IEEE TMM 17 Martinez+, “Revisiting Additive Quantization”, ECCV 16 Martinez+, “LSQ++: Lower Running Time and Higher Recall in Multi-codebook Quantization”, ECCV 18 Matsui+, “PQTable: Fast Exact Asymmetric Distance Neighbor Search for Product Quantization using Hash Tables”, ICCV 15 Matsui+, “PQk-means: Billion-scale Clustering for Product-quantized Codes”, ACMMM 17 Matsui+, “Reconfigurable Inverted Index”, ACMMM 18 Ning+, “Scalable Image Retrieval by Sparse Product Quantization”, IEEE TMM 17 Norouzi and Fleet, “Cartesian k-means”, CVPR 13 Ozan+, “Competitive Quantization for Approximate Nearest Neighbor Search”, IEEE TKDE 16 Spyromitros-Xious+, “A Comprehensive Study over VLAD and Product Quantization in Large-scale Image Retrieval”, IEEE TMM 14 Yu+, “Product Quantization Network for Fast Image Retrieval”, ECCV 18 Yu+, “Generative Adversarial Product Quantization”, ACMMM 18 Wang+, “Optimized Distances for Binary Code Ranking”, ACMMM 14 Wang+, “Optimized Cartesian k-means”, IEEE TKDE 15 Wang+, “Supervised Quantization for Similarity Search”, CVPR 16 Wang and Zhang, “Composite Quantization”, IEEE TPAMI 19 Wieschollek+, “Efficient Large-scale Approximate Nearest Neighbor Search on the GPU”, CVPR 16 Wu+, “Multiscale Quantization for Fast Similarity Search”, NIPS 17 Wu+, “Quantized Convolutional Neural Networks for Mobile Devices”, CVPR 16 Xia+, “Joint Inverted Indexing”, ICCV 13 Zhang+, “Composite Quantization for Approximate Nearest Neighbor Search”, ICML 14 Zhang+, “Sparse Composite Quantization”, CVPR 15. Zhang+, “Collaborative Quantization for Crossmodal Similarity Search”, CVPR 16 Zhang+, “Efficient Large-scale Approximate Nearest Neighbor Search on OpenCL FPGA”, CVPR 18